Invocation-Level Analysis

Most security tools check whether an agent can call a tool. INS inspects what each specific call will do at runtime. Same tool, different parameters, different risk. Every invocation passes through a multi-stage pipeline of anti-evasion normalization, threat detection, adaptive intelligence matching, policy enforcement, and per-argument scanning.

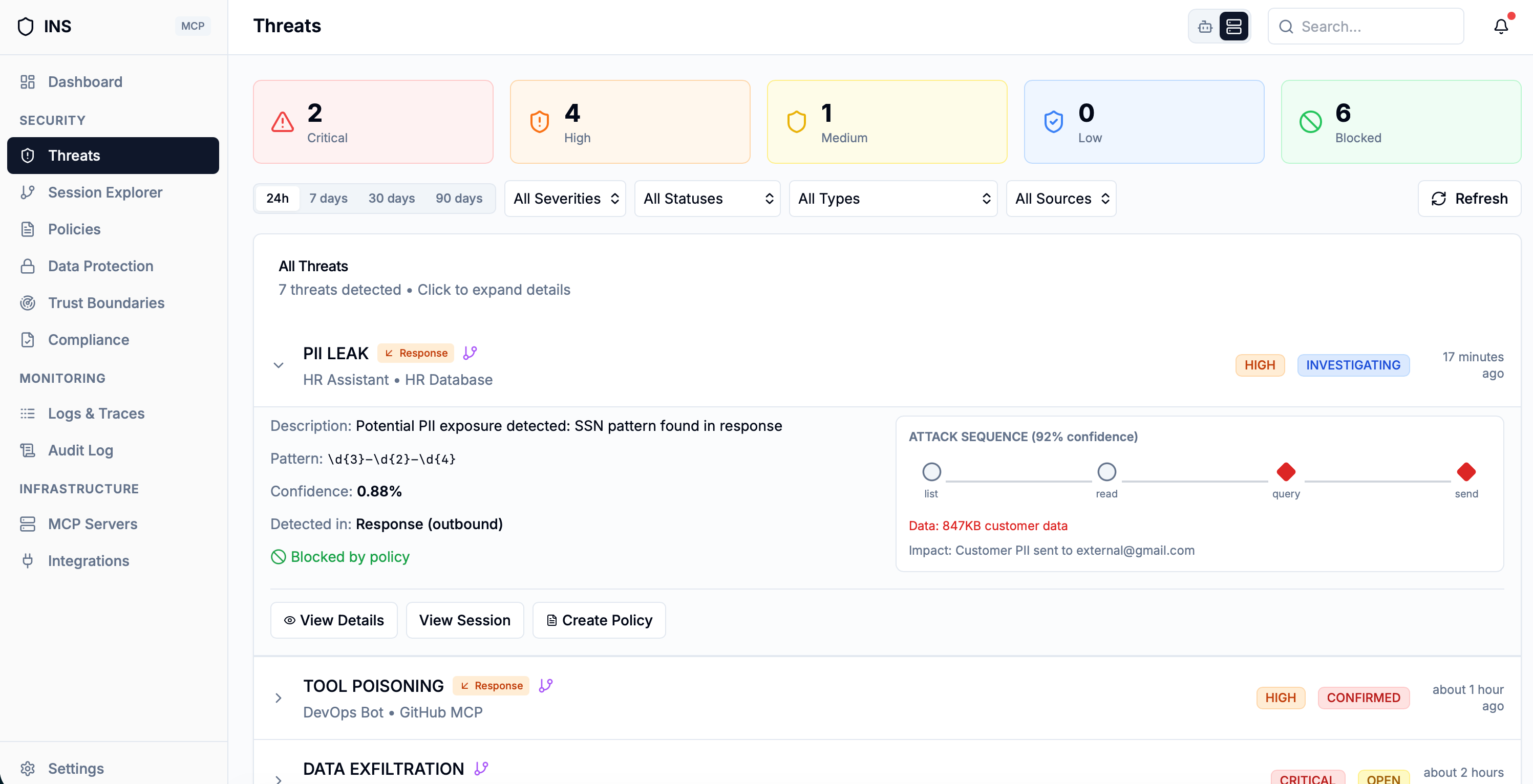

Beyond Access Control: Analyzing Every Call

Traditional security models for AI agents focus on access control: can this agent call this tool? But this coarse-grained approach misses a critical reality. A database query tool that is perfectly safe for SELECT * FROM products becomes dangerous when called with DROP TABLE users. A file reader that is benign for /docs/readme.txt is a security breach for /etc/shadow.

INS treats every tool invocation as a unique security event. The platform processes the actual payload of every request -- the tool name, method, parameters, and raw content -- through a full threat detection pipeline. This is not a static permission check; it is runtime content analysis of every tool call as it happens.

The system records which detection layers were activated, whether adaptive threat intelligence matched, and the final decision outcome for complete forensic traceability. Every decision is observable through traced spans in the request inspector.

Anti-Evasion: Text Normalization Pipeline

Before any detector sees the payload, INS runs a comprehensive normalization pipeline that defeats obfuscation techniques attackers use to bypass keyword-based detection.

NFKC + Homoglyph Resolution

Unicode NFKC normalization strips invisible characters and resolves homoglyphs (Cyrillic "a" to Latin "a"), defeating token smuggling attacks that exploit Unicode lookalike characters.

Defragmentation

Collapses fragmentation obfuscation: "con-fi-den-tial" becomes "confidential," "S.y.s.t.e.m" becomes "System," and bracket-concatenated "[Ig]+[nore]" is extracted and appended.

Leet-Speak Normalization

Converts leet-speak substitutions back to plain text: 0 to o, 3 to e, @ to a, and so on. This ensures that "1gnor3 pr3v10us" is correctly detected as "ignore previous."

The original payload is preserved in the originalPayload field for audit and forensic purposes before normalization is applied.

Threat Classes Covered

Multi-Layered Threat Pipeline

After normalization, INS evaluates the request payload through a suite of specialized detection layers, each targeting a different class of AI-specific threat. The system records exactly which layers were activated for every request, giving your SOC complete visibility into how each decision was reached.

High-confidence detections trigger an immediate block -- the pipeline short-circuits and returns a blocked result without running remaining checks. This fail-fast behavior ensures that clear threats are stopped with minimal latency.

Lower-confidence signals are retained as soft evidence for correlation. This is a critical anti-evasion mechanism: an attacker might craft a payload that evades any single detection layer, but when multiple layers each register a subtle signal, the correlated evidence still triggers a block.

Signal Correlation: Catching Sophisticated Evasion

When multiple detection layers each produce a subtle signal, INS correlates them into a combined confidence score. The more independent layers fire on the same request, the stronger the evidence. Once the correlated signal crosses the risk threshold -- or enough layers fire simultaneously -- the request is blocked.

This approach catches sophisticated attacks designed to evade any single detection method. For example, a payload might contain a mild SQL fragment, a suspicious URL pattern, and a subtle instruction-override phrase -- each ambiguous in isolation. Correlated together, they form a clear attack pattern.

The correlated result identifies the strongest signal's threat type and severity, lists every contributing indicator, and provides a descriptive reason that explains the multi-layer correlation to operators reviewing the block.

How Correlation Works

Collect Soft Signals

Sub-threshold indicators from every detection layer are retained as evidence

Correlate Evidence

Multiple weak signals are aggregated into a unified risk score

Risk-Based Decision

Block when correlated evidence crosses the risk threshold or enough layers fire in concert

Block with Full Context

Result lists every contributing signal with a descriptive correlation reason

Adaptive Threat Intelligence

Beyond signature-based detection, INS maintains a living intelligence library of previously observed attacks. Every incoming request is compared against this library using semantic similarity -- not exact matching -- so rephrased, obfuscated, or restructured variants of known attacks are still caught. If a request resembles a previously seen threat above the configured risk threshold, it is blocked even when no individual rule fires.

Semantic Similarity Matching

Goes beyond keyword and regex rules to recognize attacks by their meaning and structure, not their exact text. Each stored threat is tagged with its type, severity, and origin so operators have full forensic context when a near-match is blocked.

Self-Learning Defense

Every new attack blocked by the detection layers is enrolled into the intelligence library automatically. Future variations -- even when the attacker rewords the payload -- are caught on sight. Your defenses get stronger with every attempted attack.

Per-Argument Value Scanning

For tools/call requests, INS goes beyond scanning the full payload. Each individual argument value is extracted and independently re-evaluated through the full detection pipeline. This is Gate 2 of the three-gate security model.

Why does this matter? A prompt injection attack might be diluted below detection threshold when mixed into a large payload, but when the same attack string is isolated as a single argument value, it becomes clearly detectable. Per-argument scanning eliminates this dilution evasion technique.

When any individual argument is blocked, INS logs the specific parameter name, the tool name, and the block reason. The threat is persisted for analysis, and the entire request is rejected. This granular approach provides precise forensic information about exactly which parameter contained the threat.

Three-Gate Security Model

-

1Full Payload Scan

All detection layers, adaptive threat intelligence, and policy enforcement evaluate the complete raw payload.

-

2Per-Argument Value Scan

Each tool argument is extracted and scanned independently through the full detection pipeline.

-

3Canary Leak Detection

Unique canary tokens injected into requests are checked in responses to detect prompt leakage.

How It Works

Normalize

NFKC normalization, homoglyph resolution, defragmentation, and leet-speak conversion strip obfuscation from the payload. The original is preserved for audit.

Detect

Specialized detection layers evaluate the normalized payload. High-confidence hits block immediately. Weaker signals are retained for correlation.

Intelligence Match

The adaptive threat intelligence library compares the payload against previously observed attacks using semantic similarity, catching rephrased and obfuscated variants.

Enforce

Policy evaluation runs last. Policies can ALLOW, DENY, require approval, rate limit, or trigger DLP masking. The final decision is traced end-to-end.

Related Features

Tool Poisoning Detection

Invocation-level analysis complements tool description scanning by analyzing the actual content of every tool call at runtime.

Policy Engine

Policy enforcement runs after threat detection in the invocation pipeline, providing fine-grained control with ALLOW, DENY, and REQUIRE_APPROVAL actions.

Analyze Every Tool Call, Not Just Permissions

Join the waitlist to get early access to INS and protect your AI agents with runtime invocation-level analysis that goes beyond static access control.

Join the Waitlist